Upgrading Python in production fails for boring reasons: one transitive dependency lacks wheels, a build backend breaks under a newer pip, a container base image changes libc behavior, or a “minor” patch release regresses latency.

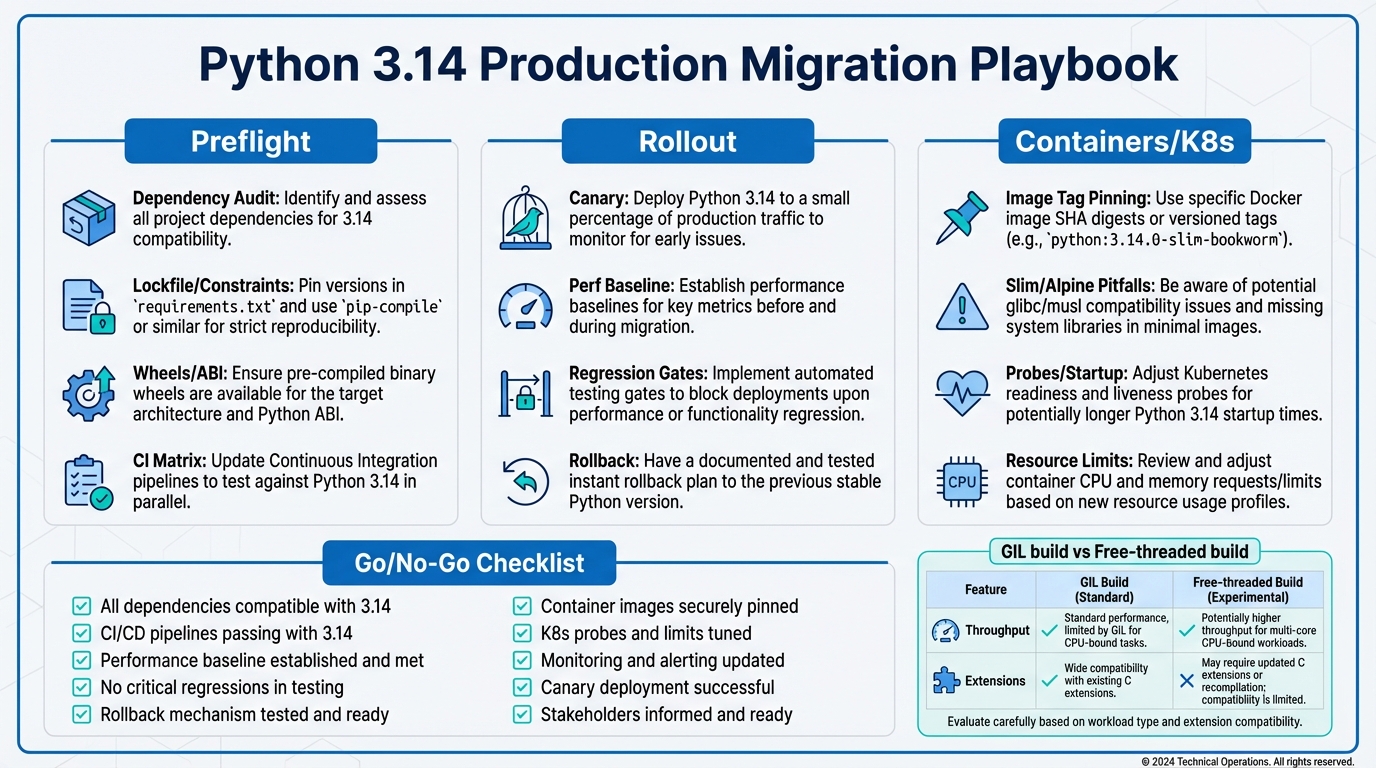

This Python 3.14 migration guide is a production playbook: preflight dependency readiness, a CI matrix that catches ABI and packaging failures early, a rollout plan with perf baselines and rollback, and container/Kubernetes safety checks—including how to evaluate free-threaded builds without accidentally benchmarking the wrong thing.

Contents

- Preflight: dependency readiness and CI strategy

- Free-threaded builds in practice (and how to evaluate them)

- Perf regressions: baselines, gates, and patch releases

- Rollout: canarying, rollback, and operational guardrails

- Containers and Kubernetes: image tags, slim/alpine gotchas, probe safety checks

- Compatibility table: common breakpoints

- Go/No-Go checklist

- Bottom Line

Preflight: dependency readiness and CI strategy

Start by assuming Python 3.14 itself won’t be the blocker. Your blockers will be:

- No wheel available for your platform/Python version (forces an sdist compile in CI or prod images).

- C extensions that aren’t compatible with a new ABI or a free-threaded build.

- Build tooling drift (pip/setuptools/wheel/build/pyproject build backends).

- Typing/runtime behavior differences that your tests never covered.

1) Inventory and classify dependencies (runtime vs build vs optional)

Export a locked set (or constraints) for the app, then separate build-time toolchain packages from runtime deps:

```bash
# If you use pip-tools: compile top-level requirements (requirements.in) into a hashed lock
pip-compile --generate-hashes -o requirements.lock requirements.in

# If you already have a working environment, freeze a constraints view of it
python -m pip freeze --exclude-editable > constraints.txt
```

If you use uv, keep the same idea: one canonical lock/constraints artifact that CI and image builds share.
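To make the runtime-vs-build split concrete, here is a minimal Python sketch that buckets the names in a pip-style lock. It assumes one requirement per line, treats comments, option lines and `--hash` continuation lines as noise, and uses a hypothetical `TOOLCHAIN` set that mirrors the packages pinned in step 4. Extend it with your own build backends.

```python
import re

# Hypothetical toolchain set, mirroring the packages pinned in step 4.
TOOLCHAIN = {"pip", "setuptools", "wheel", "build", "packaging"}

# Requirement name at the start of a line (stops at ==, >=, [extras], ;, space).
_NAME = re.compile(r"^([A-Za-z0-9][A-Za-z0-9._-]*)")


def normalize(name):
    """Normalize a distribution name the way PyPI does (PEP 503)."""
    return re.sub(r"[-_.]+", "-", name).lower()


def classify(lock_text):
    """Split requirement names in a pip-style lock into build vs runtime buckets."""
    buckets = {"build": [], "runtime": []}
    for raw in lock_text.splitlines():
        line = raw.strip()
        # Skip blanks, comments, and option lines such as --hash=... or -r file.
        if not line or line.startswith(("#", "-")):
            continue
        match = _NAME.match(line)
        if match:
            name = normalize(match.group(1))
            buckets["build" if name in TOOLCHAIN else "runtime"].append(name)
    return buckets
```

Anything that lands in the build bucket belongs with the toolchain pins, not in the list of packages your service imports at runtime.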

2) Wheel/ABI readiness check (the thing that actually breaks upgrades)

For every dependency with compiled code (numpy, pandas, cryptography, lxml, grpcio, ujson, orjson, etc.), confirm wheels exist for:

- Python 3.14 (cp314)

- Your OS/libc target (manylinux vs musllinux)

- Your CPU arch (x86_64, aarch64)

A quick way to surface “will this build from source” surprises is to force binary-only installs in CI:

```bash
python -m pip install -r requirements.lock --only-binary=:all:
```

Anything that fails here is a production risk. Decide early: pin, replace, or accept compiling in your build pipeline (not in runtime images).
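The wheels-only install tells you *that* something lacks a wheel. When triaging, it also helps to read the tags on the wheel files a project actually publishes. A rough Python sketch that checks one filename against a target, following the wheel naming convention `{name}-{version}(-{build})?-{python}-{abi}-{platform}.whl`. It is deliberately simplified and is not pip's resolver: it ignores the glibc/musl version floor encoded in tags such as `manylinux_2_28`, and it treats `abi3` wheels as forward-compatible only on default (GIL) builds.

```python
def wheel_tags(filename):
    """Split a wheel filename into (python, abi, platform) tag sets.

    Wheel names end in {python tag}-{abi tag}-{platform tag}.whl; each tag
    may be a dot-separated set (compressed tags such as py2.py3).
    """
    if not filename.endswith(".whl"):
        raise ValueError(f"not a wheel: {filename}")
    parts = filename[: -len(".whl")].split("-")
    if len(parts) not in (5, 6):  # 6 when the optional build tag is present
        raise ValueError(f"unexpected wheel filename: {filename}")
    py, abi, plat = parts[-3:]
    return set(py.split(".")), set(abi.split(".")), set(plat.split("."))


def covers_target(filename, minor=14, libc="manylinux", arch="x86_64",
                  free_threaded=False):
    """Rough check: could this wheel install on CPython 3.<minor> for libc/arch?"""
    py, abi, plat = wheel_tags(filename)
    if "none" in abi and "any" in plat:  # pure-Python wheel
        return bool(py & {"py3", f"py3{minor}", f"cp3{minor}"})
    if not any(p.startswith(libc) and p.endswith(arch) for p in plat):
        return False
    if free_threaded:
        # Free-threaded builds need wheels built for the "t" ABI (e.g. cp314t).
        return f"cp3{minor}t" in abi
    if f"cp3{minor}" in abi:
        return True
    # Stable-ABI (abi3) wheels for an older CPython 3.x also load on newer GIL builds.
    return "abi3" in abi and any(
        tag.startswith("cp3") and tag[3:].isdigit() and int(tag[3:]) <= minor
        for tag in py
    )
```

For example, with an illustrative filename, `covers_target("cryptography-44.0.0-cp39-abi3-manylinux_2_28_aarch64.whl", arch="aarch64")` is true for a default build but false with `free_threaded=True`, because free-threaded 3.14 does not use the stable ABI.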

3) CI matrix strategy: don’t just add 3.14, add the right axes

A production-grade matrix for Python 3.14 readiness typically adds:

- Python versions: current prod, 3.13, 3.14

- Platform: linux x86_64 and linux aarch64 (if you run Graviton/ARM)

- Packaging mode: wheels-only install (to catch missing wheels)

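```yaml
# GitHub Actions sketch
jobs:
  test:
    strategy:
      fail-fast: false
      matrix:
        python: ["3.12", "3.13", "3.14"]
        # setup-python's `architecture` input picks which Python build to download;
        # it does not give an x64 Linux runner an arm64 CPU, so pick the runner per arch.
        runner: ["ubuntu-24.04", "ubuntu-24.04-arm"]
        install_mode: ["normal", "wheels_only"]
    runs-on: ${{ matrix.runner }}
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with:
          python-version: ${{ matrix.python }}
      - run: |
          # Swap this for the pinned toolchain constraints from step 4.
          python -m pip install -U pip setuptools wheel
          if [ "${{ matrix.install_mode }}" = "wheels_only" ]; then
            python -m pip install -r requirements.lock --only-binary=:all:
          else
            python -m pip install -r requirements.lock
          fi
      - run: pytest -q  # assumes pytest is in requirements.lock
```

Internal reference for pipeline structure: Container Native CI/CD Pipelines: Building, Testing, and Deploying with Docker and Kubernetes.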
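A clean `pip install` does not prove the compiled modules load: an extension built for the wrong ABI, or missing a shared library in the image, often fails only at import time. One low-cost addition to the matrix is an import smoke test that `pytest -q` picks up. A minimal sketch; `COMPILED_MODULES` is a hypothetical list holding stdlib placeholders that you would replace with your own compiled dependencies (for example `numpy`, `lxml`, `orjson`).

```python
import importlib

# Placeholder stdlib modules; replace with the compiled packages from your lock.
COMPILED_MODULES = ["json", "decimal"]


def import_failures(modules):
    """Try importing each module; return {module: error text} for failures."""
    failures = {}
    for name in modules:
        try:
            importlib.import_module(name)
        except Exception as exc:  # ImportError, OSError from a missing .so, etc.
            failures[name] = f"{type(exc).__name__}: {exc}"
    return failures


def test_compiled_imports():
    """Fails the CI job if any listed module cannot be imported."""
    failures = import_failures(COMPILED_MODULES)
    assert not failures, failures
```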

4) Pin your build toolchain explicitly

Python upgrades often surface latent build issues because pip/build backends change behavior. Pin at least these in a dedicated constraints file used by CI and image builds:

```text
pip==...
setuptools==...
wheel==...
build==...
packaging==...
```

Use pip’s `--constraint` (`-c`) option in every install step to keep the toolchain deterministic.
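Pins tend to drift back into ranges over time. A small Python sketch that flags any line in the toolchain constraints file that is not an exact `name==version` pin, including the `==...` placeholders above, wildcard pins and `>=` ranges, so CI can fail before an unpinned tool slips in. The parsing rules are a simplifying assumption, not pip's full requirement grammar.

```python
import re

# name==version, where version runs until whitespace, ';' or '#'
PIN = re.compile(r"^([A-Za-z0-9][A-Za-z0-9._-]*)==([^\s;#]+)")


def unpinned_lines(constraints_text):
    """Return every requirement line that is not an exact, concrete pin."""
    bad = []
    for raw in constraints_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and blank lines
        if not line:
            continue
        match = PIN.match(line)
        version = match.group(2) if match else ""
        # Reject ranges (no match), placeholders like "...", and wildcards like 1.2.*
        if not version[:1].isdigit() or "*" in version:
            bad.append(line)
    return bad
```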

Docs to keep open while doing preflight: Python’s official “What’s New” for 3.14, plus release notes for the specific patch level you intend to ship (pin to 3.14.x, not just “3.14”).

Free-threaded builds in practice (and how to evaluate them)

Python 3.14 continues the “free-threaded” track (CPython builds without the GIL), and PEP 779 moves it from experimental to officially supported, though still optional. Treat this as a separate runtime, not a toggle. It impacts:

- C extension compatibility (some extensions assume the GIL)

- Performance profile (often higher parallel throughput; sometimes higher single-thread overhead)

- Debugging and memory behavior

How to run an evaluation without contaminating your baseline

Run two parallel test tracks:

- Default build (GIL): this is your production migration target for most teams.

- Free-threaded build: a research lane gated by extension readiness and perf tests.
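Before trusting any number from the free-threaded lane, confirm the job is actually running the build it claims. A hedged sketch: `sysconfig.get_config_var("Py_GIL_DISABLED")` is set on free-threaded builds, and `sys._is_gil_enabled()` (3.13+, underscore-private) reports whether the GIL was re-enabled at runtime, which can happen when an extension that does not declare free-threading support is imported or when `PYTHON_GIL=1` is set. `assert_lane` is a hypothetical helper name.

```python
import platform
import sys
import sysconfig


def runtime_fingerprint():
    """Describe the interpreter that is actually running this process."""
    return {
        "version": platform.python_version(),
        "machine": platform.machine(),
        # Set to 1 on free-threaded builds; 0 or None on default (GIL) builds.
        "free_threaded_build": bool(sysconfig.get_config_var("Py_GIL_DISABLED")),
        # sys._is_gil_enabled() exists on 3.13+; older versions always have a GIL.
        "gil_enabled": sys._is_gil_enabled() if hasattr(sys, "_is_gil_enabled") else True,
    }


def assert_lane(expect_free_threaded):
    """Raise if this process is not the build the CI lane claims to test."""
    fp = runtime_fingerprint()
    if fp["free_threaded_build"] != expect_free_threaded:
        raise RuntimeError(f"wrong interpreter for this lane: {fp}")
    if expect_free_threaded and fp["gil_enabled"]:
        raise RuntimeError(f"free-threaded build, but the GIL is enabled: {fp}")
    return fp
```

Logging the fingerprint next to every benchmark result also makes it obvious later which build produced which number.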

Rules that keep you honest:

- Use the same dependency lock for both lanes.

- Benchmark on the same instance type and kernel.

- Measure p95/p99 latency and CPU cost, not just “requests/sec”.
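The “same dependency lock” rule is easy to state and easy to break, for example when one lane installs from a hand-edited requirements file instead of the shared lock. A minimal sketch that diffs the `pip freeze` output captured from each lane; name normalization follows PEP 503, and any non-empty result means the two lanes are not directly comparable.

```python
import re


def _pins(freeze_text):
    """Parse `name==version` lines into {normalized_name: version}."""
    pins = {}
    for line in freeze_text.splitlines():
        line = line.strip()
        if line.startswith("#") or "==" not in line:
            continue  # skip comments, editables, and `name @ url` entries
        name, version = line.split("==", 1)
        pins[re.sub(r"[-_.]+", "-", name.strip()).lower()] = version.strip()
    return pins


def freeze_diff(freeze_a, freeze_b):
    """Return {name: (version_in_a, version_in_b)} for every mismatch."""
    a, b = _pins(freeze_a), _pins(freeze_b)
    return {
        name: (a.get(name), b.get(name))
        for name in sorted(a.keys() | b.keys())
        if a.get(name) != b.get(name)
    }
```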

Free-threaded builds only pay off if you have real Python-level parallelism (CPU-bound workloads using threads, or code that currently fights the GIL). If your service is mostly I/O-bound and already uses async, expect smaller gains.
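A quick way to see whether an interpreter can run Python-level work in parallel is to time the same CPU-bound function serially and across threads. This toy probe is a sketch, not a benchmark of your service: on a GIL build the ratio typically stays near 1.0 (or below), while a free-threaded build on a multi-core machine can approach the thread count. Use your real load tests for go/no-go decisions.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def cpu_work(n):
    """Pure-Python CPU-bound loop: no I/O and no C code that releases the GIL."""
    total = 0
    for i in range(n):
        total += i * i
    return total


def thread_speedup(threads=4, n=2_000_000):
    """Serial time divided by threaded time for `threads` copies of cpu_work(n)."""
    start = time.perf_counter()
    for _ in range(threads):
        cpu_work(n)
    serial = time.perf_counter() - start

    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=threads) as pool:
        list(pool.map(cpu_work, [n] * threads))
    threaded = time.perf_counter() - start
    return serial / threaded
```

Run it under both interpreters on the same instance type, per the rules above, and compare the ratios rather than the raw times.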

Operator guidance: don’t ship free-threaded builds to prod just because they exist. Ship them when your extension set is validated and your perf/latency SLOs are met under representative load.

Perf regressions: baselines, gates, and patch releases

Performance regressions rarely show up in unit tests. You need a baseline and a gating policy before you start a rollout.

Baseline methodology (fast enough to run on every merge)

- Micro: a small pyperformance subset (sanity signal)

- Meso: one representative endpoint load test in CI/nightly

- Macro: canary in prod with real traffic and SLO monitoring

```bash
# Example: pyperformance quick lane
python -m pip install pyperformance
pyperformance run -b "richards,regex_v8,json_dumps" --rigorous
```

Regression gates (what fails the migration)

Pick thresholds that match your business reality. Typical gates:

- p95 latency: no more than +3% on canary

- CPU per request: no more than +5%

- Memory RSS: no more than +5% steady-state

- Startup time: no more than +10% (matters for autoscaling)
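These gates are simple enough to encode directly, which keeps the canary decision mechanical rather than a judgment call during an incident. A minimal sketch; the metric names are hypothetical, and it assumes you already have a baseline and a candidate value for each metric, measured over comparable windows.

```python
# Maximum allowed relative increase per metric, taken from the gates above.
# Metric names are hypothetical; map them to your own telemetry.
GATES = {
    "p95_latency_ms": 0.03,
    "cpu_ms_per_request": 0.05,
    "rss_mb": 0.05,
    "startup_s": 0.10,
}


def gate_breaches(baseline, candidate, gates=GATES):
    """Return {metric: relative_change} for each metric above its threshold."""
    breaches = {}
    for metric, limit in gates.items():
        change = (candidate[metric] - baseline[metric]) / baseline[metric]
        if change > limit:
            breaches[metric] = round(change, 4)
    return breaches
```

For example, a p95 that moves from 100 ms to 104 ms (+4%) breaches the +3% gate, while CPU per request moving from 10 ms to 10.4 ms (+4%) stays inside its +5% budget.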

Patch releases: pin and track specific regressions

Plan to ship a specific 3.14.x, and keep an escape hatch to move to the next patch quickly. Teams get burned when they upgrade to “3.14.0”, hit a regression, and then write off the entire 3.14 release line as unusable.
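One way to make “ship a specific 3.14.x” enforceable is a startup or CI check that fails when the interpreter is not the exact patch you pinned. A sketch; `EXPECTED_PYTHON` is a hypothetical constant that you would keep in sync with your image tag, for example by deriving both from one build variable.

```python
import platform

# Hypothetical pin; keep it in sync with the image tag you actually ship.
EXPECTED_PYTHON = "3.14.2"


def check_pinned_python(expected=EXPECTED_PYTHON):
    """Raise if the running interpreter is not the exact patch release pinned."""
    actual = platform.python_version()
    if actual != expected:
        raise RuntimeError(f"expected Python {expected}, running {actual}")
    return actual
```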

Track Python patch releases in your dependency update process the same way you track OpenSSL or glibc updates. If you’re on 3.11 in prod, keep an eye on security patch releases (for example, the 3.11.15 release notes) and align your org’s patch cadence across branches.

Internal reference for release discipline: ReleaseRun release management guides (use your existing release train mechanics; don’t invent a “Python upgrade process” that only happens once a year).

Rollout: canarying, rollback, and operational guardrails

Step-by-step rollout plan

- Stage 0: CI green on 3.14 (unit + integration + wheels-only lane).

- Stage 1: build artifacts (wheels cached, image built from pinned base).

- Stage 2: canary (1–5% traffic, same autoscaling policy).

- Stage 3: ramp (25% → 50% → 100% with SLO checks at each step).
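The stage plan can be written down as a tiny promotion function so the ramp is explicit and reviewable. A sketch under stated assumptions: `STAGES` mirrors the percentages above (a 1% then 5% canary, then 25/50/100), `slo_ok` comes from whatever SLO check you already run, and returning 0 means “send all traffic back to the previous version”. If your deployment tooling supports progressive delivery, this logic would live in its configuration instead.

```python
# Traffic percentages per stage: canary (1% then 5%), then the 25/50/100 ramp.
STAGES = [1, 5, 25, 50, 100]


def next_traffic(current, slo_ok):
    """Return the next traffic share (%) for the new version; 0 means roll back."""
    if not slo_ok:
        return 0  # SLO breach at any stage: send all traffic to the old version
    if current not in STAGES:
        raise ValueError(f"unknown stage: {current}%")
    position = STAGES.index(current)
    return STAGES[min(position + 1, len(STAGES) - 1)]  # 100 stays at 100
```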

Canary requirements (non-negotiable)

- Dashboards split by version label (`python_version=3.14`).

- Error budget view: exceptions, timeouts, retries, queue depths.

- Perf view: p50/p95/p99 latency, CPU throttling, GC/heap if you track it.
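Splitting by version label matters because a blended percentile can hide a canary regression: at 5% traffic, the new version barely moves the overall p95. A minimal sketch of the per-label computation using the nearest-rank method, assuming you have raw `(label, latency_ms)` samples; real dashboards usually derive percentiles from histograms instead.

```python
from collections import defaultdict


def p95_by_label(samples):
    """samples: iterable of (version_label, latency_ms). Returns {label: p95}."""
    groups = defaultdict(list)
    for label, latency_ms in samples:
        groups[label].append(latency_ms)
    result = {}
    for label, values in groups.items():
        values.sort()
        rank = (len(values) * 95 + 99) // 100  # nearest-rank: ceil(0.95 * n), 1-based
        result[label] = values[rank - 1]
    return result
```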

Rollback plan

Rollback should be a deployment action, not a rebuild action:

- Keep the previous image digest available.

- Keep migrations backward compatible (or feature-flagged) during the rollout window.

- Automate rollback triggers on clear SLO breaks (latency/error spikes) and cap blast radius by canary percentage.
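“Clear SLO breaks” needs a definition that tolerates a single noisy window. A hedged sketch of a debounced trigger: roll back only when the last few evaluation windows all breach either an error-rate or a p95 threshold. The default thresholds and window count are placeholders, not recommendations.

```python
def should_roll_back(windows, max_error_rate=0.01, max_p95_ms=250.0, consecutive=3):
    """windows: list of (error_rate, p95_ms) per evaluation window, oldest first.

    Returns True only when each of the last `consecutive` windows breaches a
    threshold, so one noisy window does not trigger a rollback on its own.
    """
    if len(windows) < consecutive:
        return False
    recent = windows[-consecutive:]
    return all(err > max_error_rate or p95 > max_p95_ms for err, p95 in recent)
```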

Containers and Kubernetes: image tags, slim/alpine gotchas, probe safety checks

Pin Docker images by digest, not floating tags

For production, treat python:3.14 as a moving target. Use a specific patch tag, then pin by digest in your deployment manifests once validated:

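```dockerfile
# Runtime stage: a specific patch tag (append @sha256:... once validated)
FROM python:3.14.2-slim AS runtime
# ...
```

Then in Kubernetes, deploy your application image (built from that pinned base) by digest:

```yaml
# registry.example.com/your-app is a placeholder for your own image
image: registry.example.com/your-app@sha256:...
```

slim vs alpine: pick intentionally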

python:*-slim is Debian-based (glibc). python:*-alpine is musl-based. Alpine often looks smaller until you add build deps for sdists, and musl can change behavior for native extensions and DNS/locale edge cases.

Production rule: if your dependency set relies on manylinux wheels, prefer glibc-based images (Debian/Ubuntu) unless you have a proven musllinux wheel story.
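If you are not sure which side of that line an image is on, ask the interpreter inside it. A sketch using `platform.libc_ver()`, which reports glibc on Debian/Ubuntu-based images. It does not identify musl, so the code assumes (and says so in a comment) that a non-glibc Linux result means a musl image such as `python:*-alpine`; confirm that for your own base image.

```python
import platform


def preferred_wheel_tag_prefix():
    """Return "manylinux", "musllinux", or None (not Linux) for this interpreter."""
    if platform.system() != "Linux":
        return None
    libc, _version = platform.libc_ver()
    # libc_ver() recognizes glibc. Assumption: any other Linux result is musl
    # (e.g. python:*-alpine); confirm this for your base image.
    return "manylinux" if libc == "glibc" else "musllinux"
```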

Build wheels in CI, not during image build (when possible)

Move compilation earlier so your Docker build is deterministic and fast:

```bash
# In CI: build a wheelhouse for your locked deps, using the same Python 3.14.x,
# CPU architecture, and libc (glibc vs musl) as the runtime image
python -m pip wheel -r requirements.lock -w wheelhouse/
```

Then install from the wheelhouse in the image build:

```dockerfile
COPY wheelhouse/ /wheelhouse/
COPY requirements.lock /tmp/requirements.lock
RUN python -m pip install --no-index --find-links=/wheelhouse -r /tmp/requirements.lock
```

Kubernetes deployment safety checks (Python upgrades change runtime behavior)

Python version changes can shift startup time, memory profile, and import costs. That hits probes and autoscaling.

- startupProbe: add or re-tune if cold start moved (common when deps change).

- readinessProbe: ensure it checks actual readiness (DB pool warm, migrations not running) rather than “process is up”.

- livenessProbe: avoid aggressive timeouts; Python services under CPU throttling can look dead when they’re just slow.

- resources: verify requests/limits after the upgrade; CPU throttling can cause latency regressions that look like “Python got slower”.
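To put a number on “cold start moved”, time interpreter startup plus your heaviest import under both versions on the same node. A minimal sketch; `import_stmt` defaults to a stdlib placeholder and should be replaced with your application's real entry import. For a per-module breakdown, `python -X importtime -c "import yourapp"` (where `yourapp` stands in for your package) prints import timings to stderr.

```python
import statistics
import subprocess
import sys
import time


def median_startup_seconds(import_stmt="import json", runs=5, python=sys.executable):
    """Median wall-clock time to start `python` and execute `import_stmt`."""
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        subprocess.run([python, "-c", import_stmt], check=True)
        timings.append(time.perf_counter() - start)
    return statistics.median(timings)
```

Point `python` at the old and the new interpreter in turn, and feed the difference into the startup gate and your `startupProbe` thresholds.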


Compatibility table: common breakpoints (typing, stdlib, build tooling)

| Area | What breaks | How it shows up | Mitigation |
|---|---|---|---|
| Typing / type checkers | New/changed typing features or stricter inference in tooling (mypy/pyright) | CI type-check failures after bumping tool versions alongside Python | Pin type checker versions; upgrade tooling separately; add targeted ignores with ticketed cleanup |
| stdlib behavior | Edge-case behavior changes, deprecations turning into errors, or altered defaults | Integration tests fail; prod shows subtle behavioral drift | Read 3.14 “What’s New” + deprecations; add coverage around parsing/regex/datetime/ssl paths you rely on |
| C extensions / ABI wheels | No cp314 wheels; free-threaded incompatibility; build flags differ | pip installs from sdist; compile errors; runtime crashes under load | Wheels-only CI lane; prebuild wheelhouse; replace lagging dependencies; avoid free-threaded in prod until validated |
| Build tooling | pyproject backends/setuptools behavior changes; older build requirements | “Backend subprocess exited” or PEP 517 build isolation errors | Pin pip/setuptools/wheel; update pyproject build-system.requires; keep a known-good builder image |
| Containers (alpine/musl) | Native deps behave differently or lack musllinux wheels | Long builds, missing headers, runtime locale/DNS edge cases | Prefer slim (glibc) for most prod services; only use alpine with proven wheel coverage |
| Startup time / probes | Import graph changes shift cold start; probes too strict | CrashLoopBackOff, flapping readiness | Add startupProbe; tune thresholds; measure startup time in canary |

For version-to-version planning context across 3.12 → 3.13 → 3.14, keep a separate comparison doc in your engineering wiki with: perf deltas, packaging deltas, and any runtime behavior deltas you hit in staging. Treat it like an operator runbook, not a changelog.

Go/No-Go checklist

Use this as the release gate for your Python 3.14 rollout.

Go (ship to prod) only if all are true

- [ ] CI passes on Python 3.14.x (unit + integration) on your target architectures

- [ ] Wheels-only install lane passes (no surprise sdists)

- [ ] Build toolchain is pinned and reproducible (pip/setuptools/wheel/build)

- [ ] Container image is pinned to a patch version and promoted to digest pinning

- [ ] Staging load test meets p95/p99 latency and CPU budgets vs baseline

- [ ] Canary dashboards split by version are in place

- [ ] startupProbe/readinessProbe/livenessProbe validated under cold start + throttled CPU

- [ ] Rollback is one action (redeploy old digest) and has been tested

- [ ] Incident playbook updated (on-call knows what “Python upgrade regressions” look like)

No-Go triggers (stop and fix first)

- [ ] Any critical dependency only installs from sdist in your production build path

- [ ] Any canary regression breaches SLO/error budget thresholds

- [ ] Any extension crashes or data corruption signals under load

- [ ] Kubernetes probes are flapping after the upgrade
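The first No-Go trigger can be checked mechanically if you build a wheelhouse in CI. Compiled wheels built on the CI machine (for example by `pip wheel` from an sdist, without an auditwheel repair step) carry a plain `linux_<arch>` platform tag, while binary wheels from PyPI use manylinux or musllinux tags. A sketch that lists those locally compiled wheels; pure-Python wheels built from sdists (`py3-none-any`) are intentionally not flagged, since no compilation was involved.

```python
from pathlib import Path


def locally_compiled(wheel_names):
    """Return wheel filenames whose platform tag is a plain `linux_*` tag."""
    hits = []
    for name in wheel_names:
        platform_tags = name[: -len(".whl")].split("-")[-1].split(".")
        if any(tag.startswith("linux_") for tag in platform_tags):
            hits.append(name)
    return hits


def audit_wheelhouse(directory="wheelhouse"):
    """Apply `locally_compiled` to every .whl file in a wheelhouse directory."""
    return locally_compiled(sorted(p.name for p in Path(directory).glob("*.whl")))
```

A non-empty result from `audit_wheelhouse()` is a candidate No-Go: decide per package whether to pin, replace, or accept the compile step.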

Bottom Line

Ship Python 3.14 like any other high-risk runtime change: lock dependencies, prove wheel availability, run a matrix that reflects your real platforms, and gate rollout on canary SLOs. Treat free-threaded builds as a separate product track until your extension ecosystem and benchmarks say it’s safe. Do those things, and Python 3.14 is an upgrade project, not an outage lottery.

Operational follow-ups worth standardizing across teams: a shared CI template, a shared wheelhouse strategy, and a consistent canary/rollback workflow. Those reduce the cost of every future Python patch and minor release.
