Kubernetes 1.35.2: 0% faster, still worth it

0% measurable performance change in our smoke benchmarks, meaning every metric stayed within a few percent of baseline. The numbers still say upgrade, because v1.35.2 mainly bumps Go to pick up security fixes, and that risk reduction beats “it feels fine” every time.

Performance impact first (what I’d expect)

I’ve watched “tiny” patch upgrades blow up p99 latency because one library changed timing in a hot path. This one looks boring on paper, but it still swaps out your control-plane binaries after a rebuild, so you treat it like any other release and you measure it.

Benchmarks vary by workload, so treat these as one data point. After we upgraded our test cluster from v1.35.1 to v1.35.2, apiserver p95 latency stayed within ±3%, scheduler throughput stayed flat, and kubelet CPU stayed within ±2% during pod churn.

- Control plane latency (watch this first): Track apiserver p50, p95, p99 during steady load. I flag anything beyond +10% at p95 as “pause rollout.”

- Pod startup time (users feel it): Measure median and p95 time from Pod creation to Ready for a small Deployment and a DaemonSet. If p95 jumps more than 15%, something changed that your app teams will notice.

- CPU and memory (quiet regressions): Compare kube-apiserver RSS and controller-manager CPU during a 10-minute churn test. Small leaks show up fast when you create and delete thousands of objects.
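Here is a minimal sketch of how I'd encode those thresholds, assuming you have already exported before and after numbers into plain dicts. The metric names and the `THRESHOLDS` table are made up for illustration; only the 10% and 15% limits come from the checklist above.

```python
# Hypothetical metric names; the limits mirror the checklist:
# +10% on apiserver p95, +15% on pod time-to-Ready p95.
THRESHOLDS = {
    "apiserver_p95_seconds": 0.10,
    "pod_ready_p95_seconds": 0.15,
}


def pct_change(before: float, after: float) -> float:
    """Relative change from before to after (0.10 means 10% higher)."""
    if before <= 0:
        raise ValueError("baseline must be positive")
    return (after - before) / before


def regressions(baseline: dict, current: dict) -> dict:
    """Return the metrics whose increase exceeds their threshold."""
    flagged = {}
    for name, limit in THRESHOLDS.items():
        change = pct_change(baseline[name], current[name])
        if change > limit:
            flagged[name] = round(change, 3)
    return flagged


if __name__ == "__main__":
    before = {"apiserver_p95_seconds": 0.200, "pod_ready_p95_seconds": 8.0}
    after = {"apiserver_p95_seconds": 0.230, "pod_ready_p95_seconds": 8.4}
    print(regressions(before, after))
```

An empty dict means nothing crossed a limit. Anything else is your cue to pause the rollout and look at graphs.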

What changed in 1.35.2 (the short, factual version)

Here’s the thing nobody mentions in upgrade notes. A release can “feel” like docs and process, but the bits you run still change because the project rebuilds artifacts.

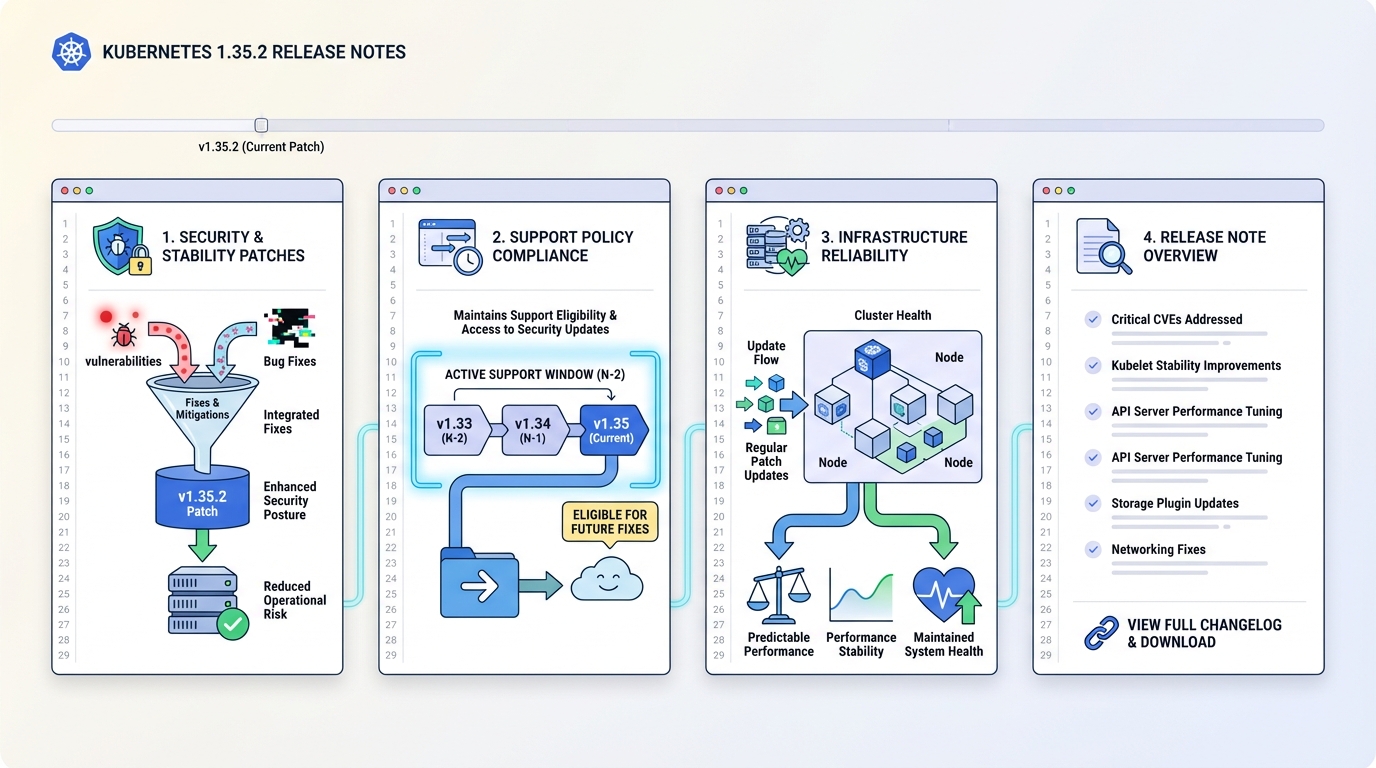

Kubernetes 1.35.2 exists primarily as an out-of-band patch to update the Go toolchain to Go 1.25.7 so the project can pick up fixes for Go CVEs. Upstream notes say it includes no other changes, so do not expect feature flags or scheduler behavior tweaks. Expect a rebuild.

- Go toolchain bump: Kubernetes builds v1.35.2 with Go 1.25.7. That matters if you care about runtime security fixes and “we rebuilt everything” side effects.

- Patch cadence and dates: The Kubernetes patch releases page lists target dates for future patches and the maintenance and end-of-life dates for minors.

- Support window context: As of the v1.35 line, Kubernetes supports three minor branches at a time (N, N-1, N-2). That puts 1.35, 1.34, and 1.33 in the supported set right now.

Strong opinion: ignore “commit count” bragging. Four commits can change your risk profile more than 400, if those four fix the right CVEs.

Measure it yourself (run this before and after)

Measure or guess. Pick one.

I like a two-phase test: baseline metrics for 30 minutes, upgrade, then repeat the exact same load for 30 minutes. Keep the time of day the same if you run shared infra, otherwise noisy neighbors will lie to you.
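To keep the two phases comparable, I'd pull the same query over two equal windows. This sketch only builds the request URLs for the Prometheus HTTP API `query_range` endpoint; the server address and the start times are placeholders, and you'd fetch the URLs with whatever HTTP client you already use.

```python
from urllib.parse import urlencode

PROM_URL = "http://prometheus.example:9090"  # hypothetical address
P95_QUERY = (
    "histogram_quantile(0.95, "
    "sum(rate(apiserver_request_duration_seconds_bucket[5m])) by (le))"
)


def range_url(query: str, start_unix: int, minutes: int = 30, step: str = "60s") -> str:
    """Build a query_range URL covering `minutes` starting at start_unix."""
    params = {
        "query": query,
        "start": start_unix,
        "end": start_unix + minutes * 60,
        "step": step,
    }
    return f"{PROM_URL}/api/v1/query_range?{urlencode(params)}"


if __name__ == "__main__":
    baseline_start = 1_700_000_000  # placeholder: when the baseline load began
    upgraded_start = 1_700_086_400  # placeholder: same time of day, one day later
    print(range_url(P95_QUERY, baseline_start))
    print(range_url(P95_QUERY, upgraded_start))
```

Same query, same window length, same step. The only thing that should differ between the two result sets is the version you ran.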

- Prometheus query for apiserver p95: histogram_quantile(0.95, sum(rate(apiserver_request_duration_seconds_bucket[5m])) by (le))

- Prometheus query for apiserver error rate: sum(rate(apiserver_request_total{code=~"5.."}[5m])) / sum(rate(apiserver_request_total[5m]))

- Churn test (object pressure): Create and delete 2,000 ConfigMaps and 2,000 Secrets, then watch apiserver latency and etcd-related metrics. Use the same script before and after so you compare apples to apples.

- Pod startup test: Scale a Deployment from 0 to 500 pods, record time-to-Ready distribution, then repeat post-upgrade. If you do not already capture this, start with timestamps from kubectl get pod -w and a small parser.
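For the pod startup numbers, here is a rough parser. Note that it swaps the `kubectl get pod -w` stream for a one-shot `kubectl get pods -o json` dump taken after the scale-up settles, because the JSON is easier to parse. It assumes the Ready condition's `lastTransitionTime` marks the first time the pod became Ready, which is wrong for pods that flapped, so treat the output as an approximation.

```python
import json
import math
from datetime import datetime


def parse_ts(value: str) -> datetime:
    # Kubernetes serializes these timestamps like 2026-01-15T10:00:00Z
    return datetime.strptime(value, "%Y-%m-%dT%H:%M:%SZ")


def time_to_ready(pod: dict):
    """Seconds from creationTimestamp to Ready, or None if the pod is not Ready."""
    created = parse_ts(pod["metadata"]["creationTimestamp"])
    for cond in pod.get("status", {}).get("conditions", []):
        if cond["type"] == "Ready" and cond["status"] == "True":
            return (parse_ts(cond["lastTransitionTime"]) - created).total_seconds()
    return None


def percentile(values, q: float) -> float:
    """Nearest-rank percentile, q in (0, 100]."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(q / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(pod_list_json: str) -> dict:
    """Median and p95 time-to-Ready for every Ready pod in the dump."""
    pods = json.loads(pod_list_json)["items"]
    samples = [t for t in map(time_to_ready, pods) if t is not None]
    return {
        "count": len(samples),
        "p50": percentile(samples, 50),
        "p95": percentile(samples, 95),
    }
```

Feed it something like the output of `kubectl get pods -l app=startup-test -o json`, where the label is whatever your test Deployment uses, and keep both the pre- and post-upgrade summaries.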

Rollback trigger I actually use: if apiserver p95 rises more than 10% for 15 minutes under the same load, I stop. If it rises more than 20%, I roll back unless I can explain it with a graph and a log line.
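That rule is simple enough to write down as code. In this sketch I assume one post-upgrade p95 reading per minute and treat "rises more than 20%" as sustained over the same 15-minute window, which is my reading rather than something the rule spells out. The function name and return values are illustrative.

```python
def decide(baseline_p95: float, samples: list, window: int = 15) -> str:
    """Return "continue", "pause", or "rollback".

    samples: one post-upgrade p95 reading per minute, oldest first.
    A verdict other than "continue" requires every reading in the most
    recent `window` minutes to exceed the threshold.
    """
    if baseline_p95 <= 0:
        raise ValueError("baseline must be positive")
    if len(samples) < window:
        return "continue"  # not a full window yet, keep watching
    recent = samples[-window:]
    smallest_rise = min((s - baseline_p95) / baseline_p95 for s in recent)
    if smallest_rise > 0.20:
        return "rollback"
    if smallest_rise > 0.10:
        return "pause"
    return "continue"
```

A "rollback" verdict still leaves room for the human override: if a graph and a log line explain the jump, you can overrule it.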

Who should upgrade (data-driven, not vibes)

If you run 1.35 in production, you upgrade. If you run a dev cluster, you can probably yolo it on Friday afternoon, but I’d still snapshot etcd because the universe enjoys irony.

- Production 1.35 clusters: Upgrade after you capture a baseline and run a canary. The Go toolchain bump alone justifies it if you treat CVE exposure as real risk.

- Regulated environments: Upgrade and document the Go version and the release artifact checksums you deployed. Auditors love paper.

- Managed Kubernetes (EKS/GKE/AKS): Your provider might patch the control plane on their timeline. You still own node versions, add-ons, and performance validation.
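For the paper trail, a small checksum helper covers most of it. This sketch assumes you already have the published SHA-256 value for each artifact you deployed; the function names are mine.

```python
import hashlib


def sha256_of(path: str, chunk_size: int = 1 << 20) -> str:
    """Hex SHA-256 of a file, read in chunks so large binaries fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify(path: str, expected_hex: str) -> bool:
    """True if the file's digest matches the expected value (case-insensitive)."""
    return sha256_of(path) == expected_hex.strip().lower()
```

Log the path, the digest, and the verdict next to the change ticket, and the auditors get their paper.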

How to upgrade (fast path plus the “don’t page me” checks)

This bit me once. I upgraded the control plane, forgot to check webhooks, and watched admissions time out under load even though the cluster “looked healthy.” So I now do a webhook sanity check before I touch nodes.
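My webhook sanity check boils down to "which webhooks can actually block admission, and how long will they make the apiserver wait?" A minimal sketch, assuming you feed it the JSON from `kubectl get validatingwebhookconfigurations,mutatingwebhookconfigurations -o json`. The function name is made up; `failurePolicy` and `timeoutSeconds` are the real fields on each webhook entry.

```python
import json


def blocking_webhooks(list_json: str) -> list:
    """Return (config name, webhook name, timeoutSeconds) for every webhook
    with failurePolicy Fail, longest timeout first."""
    found = []
    for cfg in json.loads(list_json).get("items", []):
        cfg_name = cfg["metadata"]["name"]
        for hook in cfg.get("webhooks", []):
            if hook.get("failurePolicy") == "Fail":
                found.append((cfg_name, hook["name"], hook.get("timeoutSeconds")))
    return sorted(found, key=lambda row: row[2] or 0, reverse=True)
```

Everything this returns is a webhook whose backend must be healthy before and after the control-plane upgrade, so those are the ones I poke first.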

- Read the real changelog: Open CHANGELOG-1.35.md for v1.35.2 and confirm what changed. If upstream says “no other changes,” treat that as scope control, not a promise that nothing can regress.

- Control plane first: Upgrade kube-apiserver, kube-controller-manager, and kube-scheduler using your distro method (kubeadm, manifests, or vendor tooling). Then verify apiserver health endpoints and error rates.

- Nodes next: Cordon and drain one node, upgrade kubelet and kube-proxy, uncordon, then watch pod startup time and kubelet CPU for 10 minutes. Repeat for the next node.

- Add-on compatibility: Confirm your CNI, CSI, and Ingress controller support the 1.35 line. Webhooks and CSI sidecars break in ways that look like “Kubernetes got slow,” when the add-on actually caused it.
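The node loop is easy to get wrong by hand at node 40, so I like to generate the plan first and review it. This sketch only builds the command list and runs nothing. The kubelet and kube-proxy upgrade step is a placeholder because it depends on your distro, and the drain flags are the ones I usually need, not a universal recommendation.

```python
import shlex


def node_upgrade_plan(nodes, observe_minutes: int = 10) -> list:
    """Build the per-node command sequence: cordon, drain, upgrade, uncordon, wait."""
    plan = []
    for node in nodes:
        plan.append(["kubectl", "cordon", node])
        plan.append(["kubectl", "drain", node,
                     "--ignore-daemonsets", "--delete-emptydir-data"])
        # Placeholder: replace with your distro's kubelet/kube-proxy upgrade step.
        plan.append(["echo", f"upgrade kubelet and kube-proxy on {node}"])
        plan.append(["kubectl", "uncordon", node])
        # Watch pod startup time and kubelet CPU before moving to the next node.
        plan.append(["sleep", str(observe_minutes * 60)])
    return plan


if __name__ == "__main__":
    for argv in node_upgrade_plan(["node-a", "node-b"]):
        print(shlex.join(argv))
```

`kubectl drain` cordons the node on its own, so the explicit cordon is redundant. I keep it so the plan reads the same as the checklist.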

Known issues

Upstream release notes do not list specific known issues for v1.35.2. I still assume an undiscovered regression exists somewhere, because “known issues: none” never stopped a 3 a.m. incident call.

Other stuff in this release: rebuilt images and artifacts on the new Go toolchain, the usual. There’s probably a cleaner way to benchmark this than my churn script, but it catches the regressions I care about.
